Enterprise AI Engineering Platform — Local-first Memory + Governance

Model-agnostic workflows with inspectable memory. Use your own LLM subscriptions and keep data on your machine.

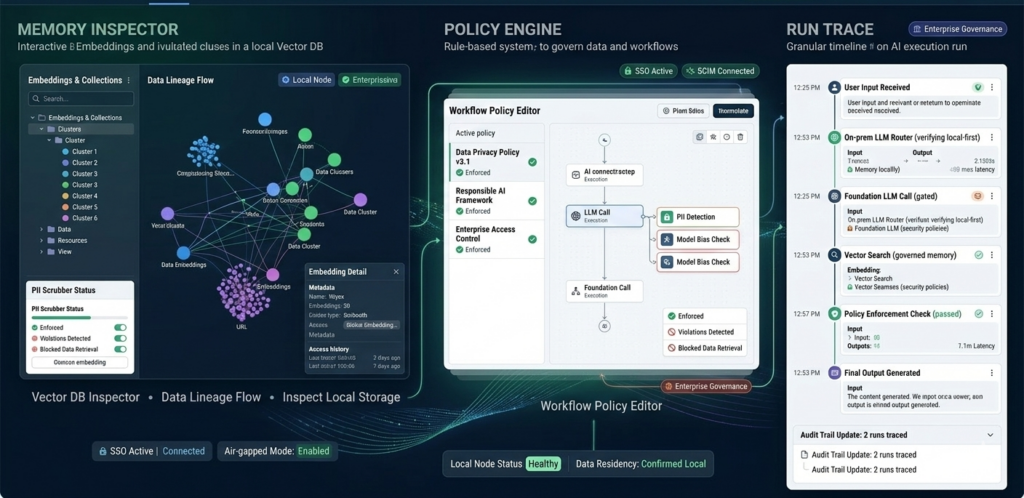

NYEX is a local-first AI orchestration and governance platform that lets teams safely operate multiple LLMs with shared memory, auditability, and enterprise controls, without owning model compute.

- Data stays local

- Token savings via on-prem processing

- Governed, inspectable memory

- Windows / macOS / Linux

- Works with OpenAI / Anthropic / Azure / Local LLM

How It Works

- Download platform bundle

- Install Node.js + runtime

- Verify signed installer

- Add your foundation LLM subscription

- Install small on-prem LLM

- Route tasks intelligently

Differentiators

Security & Trust

- Local-first data handling

- Signed builds + integrity verification

- Policy engine enforcement

- Audit trails + traceability

- Optional certified distribution + LTS

- Security Overview

- Architecture Diagram

- Responsible Model Use

- Subprocessor list

Learn the Platform in 20 Minutes

Enter your details to unlock the full video library and download links.

Watch video

Download Section

- OS selection dropdown (Windows/macOS/Linux)

- Version number + release date

- SHA-256 checksum + “Verify” instructions

- “Signed by …” certificate note
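
The "Verify" step for the SHA-256 checksum could look roughly like the sketch below. It is only an illustration: the function names and the file path are hypothetical, and it checks the checksum only, not the signing certificate.

```python
import hashlib
import hmac
from pathlib import Path


def sha256_of_file(path: Path, chunk_size: int = 1 << 20) -> str:
    """Return the hex SHA-256 digest of a file, reading it in chunks."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def matches_published_checksum(path: Path, published_hex: str) -> bool:
    """Compare the local digest with the checksum copied from the download page."""
    expected = published_hex.strip().lower()  # tolerate whitespace and upper-case hex
    return hmac.compare_digest(sha256_of_file(path), expected)
```

A caller would pass the downloaded installer path (for example a hypothetical `Path("installer.bin")`) and the published checksum string, and refuse to install when the function returns `False`.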

Own Your AI. Control Your Costs. Keep Your Data.

GO (First 3 Months FREE)

Deploy your AI agents locally and experience how a digital workforce can 5X your productivity and scale faster - for FREE!

- NYEX AI Core Infrastructure

- 1 Device

- Foundation Model Routing

- Local Model Stack

- Orchestration Agents

- Persistent Local Memory

- 20,000 Token Limit (resets every 6 hours)

- Model Context Protocol (MCP)

- Connectors

PRO BUSINESS

Optimized for individual developers, independent consultants, attorneys, compliance specialists, creators, and technical founders.

- NYEX AI Core Infrastructure

- Up to 2 Devices

- Foundation Model Routing

- Local Model Stack

- Orchestration Agents

- Persistent Local Memory

- Email Support

- Unlimited

STRIKE TEAM

Optimized for product teams, revenue-generating agencies, technical consultancies, fast-scaling startups, and professional services firms.

- NYEX AI Core Infrastructure

- Up to 6 Devices

- Foundation Model Routing

- Local Model Stack

- Orchestration Agents

- Persistent Local Memory

- RBAC: Role-Based Access Control (Advanced)

- Shared Local Memory Across Team

- Tool Bus Engine

- MCP Studio Adapter, HTTP Transport Inbound & Outbound

- Policy As Code

- Certified Build (Addon)

- Email Support (Priority)

- Hypercare Enterprise Support SLA (Business Hours)

- Unlimited

ENTERPRISE

Optimized for enterprise transformation and adoption, compliance, quality gates, deployment controls, SLAs.

- NYEX AI Core Infrastructure

- Devices - Enterprise Wide

- Digital Transformation with AI Infrastructure

- Foundation Model Routing

- Local Model Stack

- Orchestration Agents

- Persistent Local Memory

- Shared Local Memory Across Team

- Tool Bus Engine

- MCP Studio Adapter, HTTP Transport Inbound & Outbound

- RBAC: Role-Based Access Control (Advanced)

- SSO

- Policy As Code (Advanced Governance)

- Governance & Audit Logs (Full + Evidence)

- Certified Build

- Security Framework

- Email Support (Priority + 24/7)

- Hypercare Enterprise Support SLA (Contractual SLA)

- Dedicated Success Engineer

Contact + Enterprise Inquiry

Quick contact

- Sales email

- Security email (security@)

- Support portal link

- Schedule a call

AI Questions & Answers

Explore common questions to better understand how our AI services work, their benefits, and how they can be tailored to your business needs.

Does any of our data get sent to your servers?

No. If you connect to a foundation LLM (such as OpenAI, Anthropic, or Azure), data is sent only to that provider, under your own subscription and policies, and never through us.

We do not host, store, or process your engineering data in the cloud.

How does token cost reduction actually work?

● Routine or structured tasks run on a local on-prem LLM.

● Higher-quality foundation models are used only when necessary.

● Persistent vector memory ensures only relevant context is retrieved, reducing unnecessary token load.

You use your own LLM subscriptions, so you only pay for what you consume — and the system minimizes unnecessary external calls.
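
To make the routing idea concrete, here is a minimal sketch of how routine tasks could stay on the local model while the rest escalate. It is an illustration under stated assumptions, not NYEX's actual routing logic: the `Task` fields, the `route` rule, and `external_call_share` are all hypothetical names.

```python
from dataclasses import dataclass
from enum import Enum


class Target(Enum):
    LOCAL = "local"            # small on-prem LLM, no per-token fee
    FOUNDATION = "foundation"  # external provider, billed per token


@dataclass(frozen=True)
class Task:
    prompt: str
    routine: bool                     # hypothetical flag: routine or structured work
    needs_high_quality: bool = False  # hypothetical flag: force escalation


def route(task: Task) -> Target:
    """Send routine tasks to the local model; escalate everything else."""
    if task.routine and not task.needs_high_quality:
        return Target.LOCAL
    return Target.FOUNDATION


def external_call_share(tasks: list[Task]) -> float:
    """Fraction of tasks that still reach a foundation model."""
    if not tasks:
        return 0.0
    external = sum(1 for task in tasks if route(task) is Target.FOUNDATION)
    return external / len(tasks)
```

Under this rule, the more of a workload that is flagged as routine, the smaller the share of calls billed by an external provider.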

What does “persistent memory” mean in practice?

Our platform stores structured memory in a local vector database so that:

● Knowledge persists across runs

● Context improves over time

● Memory entries can be inspected, edited, approved, or deprecated

● Workflows become stable and repeatable

This turns AI from “chat sessions” into a governed engineering system.
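
As a rough illustration of governed memory, the sketch below models entries with a review status and retrieves only approved ones by cosine similarity. The `Status` values, the `MemoryEntry` shape, and the in-memory list are assumptions made for the example; a real deployment would use a local vector database and real embeddings.

```python
import math
from dataclasses import dataclass
from enum import Enum


class Status(Enum):
    PROPOSED = "proposed"      # awaiting review
    APPROVED = "approved"      # eligible for retrieval
    DEPRECATED = "deprecated"  # kept for audit, never retrieved


@dataclass
class MemoryEntry:
    text: str
    embedding: list[float]
    status: Status = Status.PROPOSED


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity of two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def retrieve(query: list[float], entries: list[MemoryEntry], k: int = 2) -> list[MemoryEntry]:
    """Return the k approved entries most similar to the query embedding."""
    approved = [entry for entry in entries if entry.status is Status.APPROVED]
    ranked = sorted(approved, key=lambda entry: cosine(query, entry.embedding), reverse=True)
    return ranked[:k]
```

Because proposed and deprecated entries are filtered out before ranking, changing an entry's status changes what later runs can see without deleting its history.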

Is this tied to a specific LLM provider?

No. The platform is model-agnostic. You can:

● Use OpenAI, Anthropic, Azure, or other foundation models

● Use a small on-prem LLM for local processing

● Switch providers without rebuilding your workflows

This prevents vendor lock-in and keeps your architecture flexible.
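
One common way to get this kind of provider independence is to write workflows against a small interface instead of a vendor SDK. The sketch below shows the pattern only; `ChatProvider`, `EchoProvider`, and `summarize_ticket` are hypothetical names, and the stand-in provider makes no network calls.

```python
from typing import Protocol


class ChatProvider(Protocol):
    """Minimal interface a workflow depends on (hypothetical)."""

    name: str

    def complete(self, prompt: str) -> str: ...


class EchoProvider:
    """Stand-in provider; a real adapter would call the vendor's API here."""

    def __init__(self, name: str) -> None:
        self.name = name

    def complete(self, prompt: str) -> str:
        return f"[{self.name}] {prompt}"


def summarize_ticket(provider: ChatProvider, ticket: str) -> str:
    """A workflow step that depends only on the ChatProvider interface."""
    return provider.complete(f"Summarize: {ticket}")
```

Swapping `EchoProvider("provider-a")` for `EchoProvider("provider-b")`, or for a real adapter with the same `complete` method, leaves `summarize_ticket` unchanged.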

Is this suitable for enterprise or regulated environments?

Yes. Because data and memory are stored locally, the platform supports:

● Stronger data control

● Reduced cloud exposure risk

● Air-gapped deployment options

● Policy enforcement and audit tracing

● Role-based access control (STRIKE TEAM and ENTERPRISE tiers) and SSO (ENTERPRISE tier)

This significantly simplifies enterprise security reviews.
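
Policy enforcement with audit tracing can be pictured as a check that runs before each model call and records its decision. The sketch below is a simplified assumption of how such a check might look, not the platform's policy engine: the `Policy` fields, the `check` function, and the log format are all hypothetical.

```python
import json
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class Policy:
    allowed_targets: frozenset[str]  # e.g. {"local"}; names are hypothetical
    blocked_terms: tuple[str, ...]   # lower-case substrings that must not leave the machine


@dataclass
class AuditLog:
    records: list[dict] = field(default_factory=list)

    def record(self, user: str, target: str, allowed: bool, reason: str) -> None:
        self.records.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "user": user,
            "target": target,
            "allowed": allowed,
            "reason": reason,
        })

    def export(self) -> str:
        """Serialize the trail as JSON Lines, one decision per line."""
        return "\n".join(json.dumps(record) for record in self.records)


def check(policy: Policy, log: AuditLog, user: str, target: str, prompt: str) -> bool:
    """Decide whether a call may proceed and record the decision either way."""
    if target not in policy.allowed_targets:
        allowed, reason = False, "target not allowed"
    elif any(term in prompt.lower() for term in policy.blocked_terms):
        allowed, reason = False, "blocked term in prompt"
    else:
        allowed, reason = True, "ok"
    log.record(user, target, allowed, reason)
    return allowed
```

Recording denied calls as well as allowed ones is what makes the trail useful as review evidence.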

Do we need a large engineering team to use this?

No. The platform is designed to reduce manual orchestration and repetitive AI setup.

It includes:

● Reusable workflows

● Built-in evaluation and replay

● Automated routing between models

● Governance and audit logging

Small teams can deliver production-grade AI systems without building complex infrastructure from scratch.

What kind of hardware is required to run the local LLM?

For small on-prem LLMs used for routine processing, a modern machine with sufficient RAM is typically adequate.

Foundation models still run through your existing cloud subscription when higher-quality output is required.

You can scale your local model capacity based on your needs.

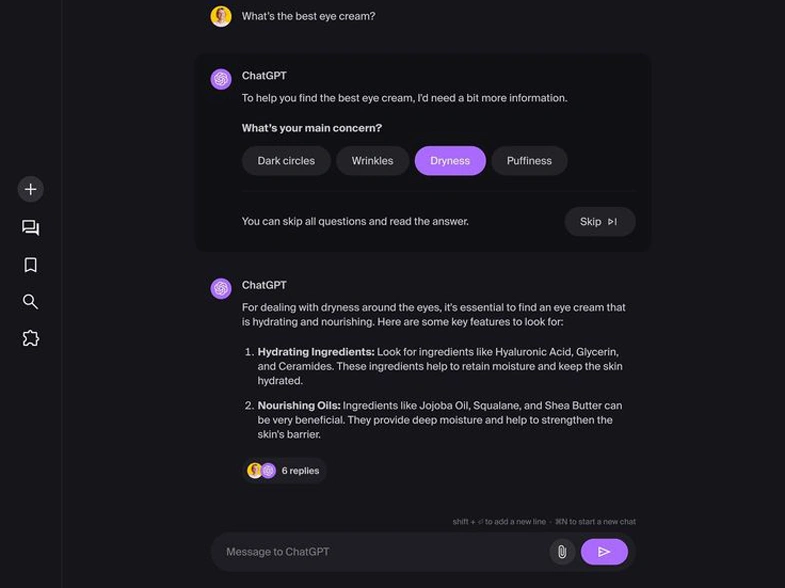

How is this different from using prompts in ChatGPT or other AI tools?

This platform is built for engineering systems:

● Persistent memory

● Inspectable vector storage

● Replayable executions

● Governance and policy controls

● Multi-step workflow orchestration

● Enterprise auditability

It moves AI from experimentation to structured, repeatable, and governed production workflows.

How Does Licensing Work If Everything Runs Locally?

a. License / Subscription for NYEX (The Platform)

This license gives you the right to use the NYEX AI Engineering Platform itself.

What the NYEX license controls:

● Access to the platform software

● Feature tiers (GO / PRO BUSINESS / STRIKE TEAM / ENTERPRISE)

● Governance capabilities (policy engine, approvals, audit exports)

● Collaboration features (multi-user workspaces)

● Enterprise controls (SSO, RBAC, compliance modules)

● Certified builds and enterprise distribution (if applicable)

● Software updates and support

What the NYEX license does NOT include:

● LLM token usage

● Foundation model subscription

● Cloud AI costs

● Local hardware costs

The NYEX license governs the engineering infrastructure layer, not the AI compute layer.

b. License / Subscription for the LLM Provider

This is completely separate.

You bring your own:

● OpenAI subscription

● Anthropic subscription

● Azure OpenAI subscription

● Or any other foundation model provider

You pay those providers directly for:

● Token usage

● Model access

● API calls

● Any cloud compute involved

NYEX does not resell tokens and does not add markup to your LLM costs.

This means:

● You pay only for what you consume.

● You maintain full control over your LLM billing.

● You can switch providers without changing your NYEX license.

c. Local LLM Usage

If you use a small on-prem LLM:

● You do not pay per token.

● Costs are limited to your own hardware.

● NYEX simply orchestrates and routes tasks to it.

NYEX provides the orchestration and governance layer — not the model license.

Why This Separation Matters

1. Cost Transparency

You see exactly what you’re paying for:

● Platform capability (NYEX)

● AI compute usage (LLM provider)

2. No Vendor Lock-in

You can:

● Change model providers

● Adjust usage patterns

● Control token consumption

Without affecting your NYEX license.

3. Enterprise Procurement Simplicity

Enterprises often:

● Already have LLM provider agreements

● Already have cloud contracts

● Already have model governance policies

NYEX integrates into those existing agreements rather than replacing them.